LLM and Agent “Leaks” Are Not Edge Cases. They Are Design Signals

Over the past year, a series of so-called “leaks” involving large language models (LLMs) and emerging agentic systems has captured industry attention. The most-cited example is the exposure of the system prompts and behavioral scaffolding behind models such as Anthropic's Claude, alongside similar disclosures affecting models from OpenAI. These events have often been framed as isolated incidents or, alternatively, dismissed as overblown artifacts of jailb…

Apr 8

What Is Constitutional AI?

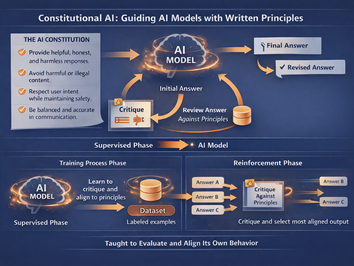

As artificial intelligence systems become more capable and more embedded in business operations, a central question continues to surface: How do you ensure these systems behave in ways that are useful, safe, and aligned with human intent? One of the more influential answers to emerge in recent years is Constitutional AI , an approach pioneered by Anthropic . How AI moves from training to the reinforcement phase in "Constitutional AI" (AI generated image) At its core, Constitu

Apr 8